
Zeplinfor windows12/28/2023 Globs are allowed.Ĭomma-separated list of jars to include on the driver and executor classpaths. The number of executors for static allocationĬomma-separated list of files to be placed in the working directory of each executor. The number of cores to use on each executorĮxecutor memory per worker instance. where SparkContext is initialized, in the same format as JVM memory strings with a size unit suffix ("k", "m", "g" or "t") (e.g. Number of cores to use for the driver process, only in cluster mode.Īmount of memory to use for the driver process, i.e. The deploy mode of Spark driver program, either "client" or "cluster", Which means to launch driver program locally ("client") or remotely ("cluster") on one of the nodes inside the cluster. For a list of additional properties, refer to Spark Available Properties. You can also set other Spark properties which are not listed in the table.

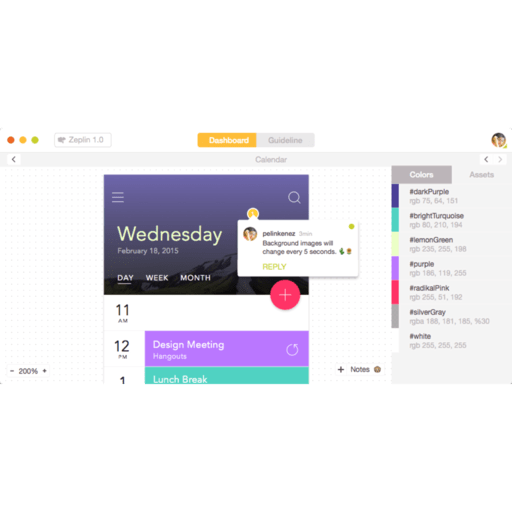

The Spark interpreter can be configured with properties provided by Zeppelin. p 4040:4040 is to expose Spark web ui, so that you can access Spark web ui via Configuration We only verify the spark local mode in Zeppelin docker, other modes may not work due to network issues. Here we download Spark 3.1.2 to /mnt/disk1/spark-3.1.2,Īnd we mount it to Zeppelin docker container and run the following command to start Zeppelin docker container.ĭocker run -u $(id -u ) -p 8080:8080 -p 4040:4040 -rm -v /mnt/disk1/spark-3.1.2:/opt/spark -e SPARK_HOME =/opt/spark -name zeppelin apache/zeppelin:0.10.0Īfter running the above command, you can open to play Spark in Zeppelin. Without any extra configuration, you can run most of tutorial notes under folder Spark Tutorial directly.įirst you need to download Spark, because there's no Spark binary distribution shipped with Zeppelin.Į.g. Including IPython and IRkernel prerequisites, so %spark.pyspark would use IPython and %spark.ir is enabled. Miniconda and lots of useful python and R libraries In the Zeppelin docker image, we have already installed You can not only submit Spark job via Zeppelin notebook UI, but also can do that via its rest api (You can use Zeppelin as Spark job server).įor beginner, we would suggest you to play Spark in Zeppelin docker. Multiple user can work in one Zeppelin instance without affecting each other.

You can visualize Spark Dataset/DataFrame vis Python/R's plotting libraries, and even you can make SparkR Shiny app in Zeppelin Interactive development user experience increase your productivity you can write Scala UDF and use it in PySpark Scala, SQL, Python, R are supported, besides that you can also collaborate across languages, e.g. You can run different Scala versions (2.10/2.11/2.12) of Spark in on Zeppelin instance You can run different versions of Spark in one Zeppelin instance Used to create R shiny app with SparkR support

Provides an R environment with SparkR support based on Jupyter IRKernel Provides an vanilla R environment with SparkR support NameĬreates a SparkContext/SparkSession and provides a Scala environment It provides high-level APIs in Java, Scala, Python and R, and an optimized engine that supports general execution graphs.Īpache Spark is supported in Zeppelin with Spark interpreter group which consists of following interpreters. Writing Helium Visualization: TransformationĪpache Spark is a fast and general-purpose cluster computing system.
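The driver memory property above takes a JVM-style memory string with a size unit suffix ("k", "m", "g" or "t"). As a rough illustration of that format, here is a minimal Python sketch that converts such a string to bytes. The function name `parse_memory_string` is hypothetical and not part of Zeppelin or Spark, and the sketch assumes the suffixes are binary multiples (1k = 1024 bytes). It only handles the four single-letter suffixes named above, so it may reject other forms that Spark itself accepts.

```python
import re

# Assumption: suffixes are binary multiples (k = 1024 bytes, and so on).
_UNITS = {"k": 1024, "m": 1024**2, "g": 1024**3, "t": 1024**4}


def parse_memory_string(value: str) -> int:
    """Convert a string such as '512m' or '2g' to a number of bytes.

    Hypothetical helper for illustration only; it accepts an integer
    followed by exactly one of the suffixes k, m, g or t.
    """
    match = re.fullmatch(r"(\d+)([kmgt])", value.strip().lower())
    if match is None:
        raise ValueError(f"expected <integer><k|m|g|t>, got {value!r}")
    number, unit = match.groups()
    return int(number) * _UNITS[unit]


if __name__ == "__main__":
    for setting in ("512m", "2g"):
        print(setting, "->", parse_memory_string(setting), "bytes")
```

A check like this can be useful for catching a malformed value, such as a missing suffix, before it is entered in the interpreter settings.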
